AWS Lambda vs Containers: Making the Right Choice in Modern Cloud Architectures

Like many Kubernetes (K8s) users, you might have recently found yourself dealing with operational headaches and high costs. The complexity of operating the technology, coupled with the fact that fine-grained controls aren't always necessary, drives the search for alternatives.

In this article, we will examine whether AWS Lambda (or Serverless architecture in general) is a viable alternative to containers. While the study focuses on AWS, the findings are generally applicable to other Cloud Service Providers (CSPs) as well.

Introduction

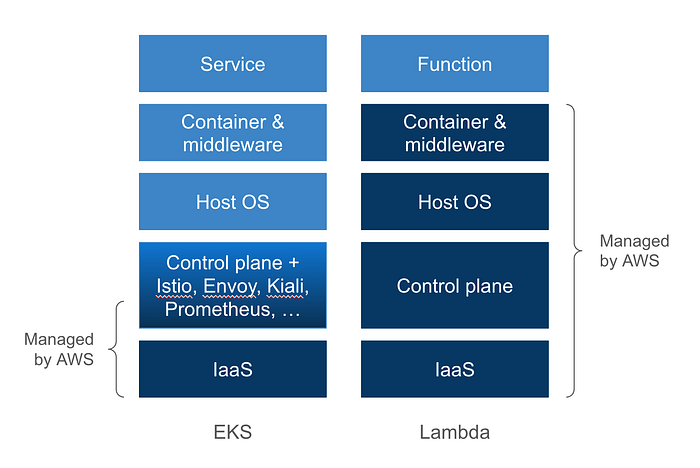

A container is often described as a lightweight Virtual Machine (VM), although unlike a VM it shares the host's kernel rather than running its own. It bundles your application code with the Operating System (OS) libraries and middleware packages it needs. The Docker image you test on your local machine is the same one deployed in production. In a production environment, Kubernetes is responsible for deploying and managing these containers at scale.

However, this approach requires significant engineering effort. You need to define the host pool, distribute the hosts across different Availability Zones for resilience, and tune the pool size to control costs.

On the other hand, AWS Lambda operates on a serverless application model. You break your application into functions, zip the code and its dependencies, and upload it to AWS. AWS deploys the necessary infrastructure on demand, keeps the OS and middleware up to date, and balances the load automatically. Most importantly, you only pay when your functions are actually running.
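To make the function model concrete, here is a minimal sketch of what one such function might look like in Python. The `(event, context)` signature is the standard shape of a Python Lambda handler; the function name `greet_handler` and the `name` field in the event are assumptions made for this example only.

```python
import json


def greet_handler(event, context):
    """Entry point that the Lambda runtime calls once per invocation.

    `event` carries the request payload and `context` carries runtime
    metadata. The "name" field is a hypothetical input for this sketch.
    """
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


if __name__ == "__main__":
    # Local smoke test; in AWS the runtime supplies both arguments.
    print(greet_handler({"name": "Lambda"}, None))
```

Everything outside this function (servers, OS patching, load balancing) is what AWS takes over in this model.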

Cost Perspective

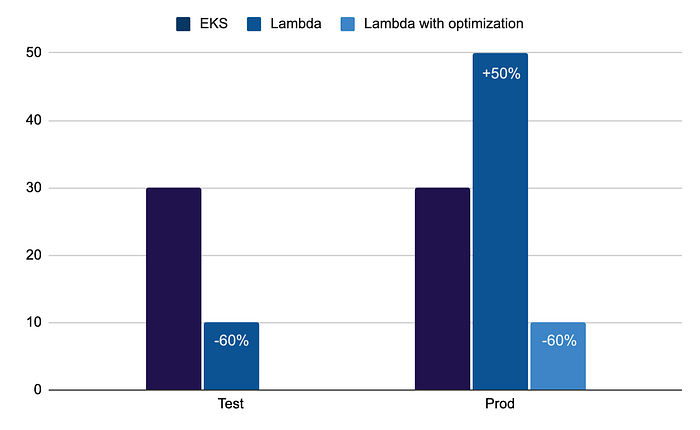

When utilizing containers, you must provision adequate capacity to handle incoming traffic. Although Kubernetes assists in autoscaling, there is always a fixed cost for "idle capacity".

Lambda, however, is charged based on usage. It closely follows your traffic curve; if there is no traffic, the cost is zero. This proves advantageous for test systems or environments with low nighttime activity.

However, for functions with low CPU consumption but a high and consistent call volume (e.g., billions of calls per month), containers might be more cost-effective. For instance, an analysis showed that migrating services to Lambda could increase costs by 50% in production while cutting them to roughly a third in a test environment.
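The trade-off can be explored with a rough cost model. The sketch below is illustrative only: the two price constants are assumed values, not quoted from this article, and should be replaced with the current pricing for your region. It also ignores the free tier, data transfer, and the engineering cost of running a cluster. The function names are hypothetical.

```python
# Assumed prices in USD; replace with current figures for your region.
PRICE_PER_MILLION_REQUESTS = 0.20
PRICE_PER_GB_SECOND = 0.0000166667


def lambda_monthly_cost(requests, avg_duration_ms, memory_mb):
    """Estimate monthly Lambda cost from request count, duration, and memory."""
    request_cost = requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    gb_seconds = requests * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return request_cost + gb_seconds * PRICE_PER_GB_SECOND


def break_even_requests(container_monthly_cost, avg_duration_ms, memory_mb):
    """Monthly request count at which Lambda costs as much as a fixed-cost cluster."""
    cost_per_request = lambda_monthly_cost(1, avg_duration_ms, memory_mb)
    return container_monthly_cost / cost_per_request


if __name__ == "__main__":
    # Hypothetical workload: 50 ms per call at 128 MB vs. a 500 USD/month cluster.
    print(round(break_even_requests(500, avg_duration_ms=50, memory_mb=128)))
```

Below the break-even volume the pay-per-use model wins; above it, the fixed-cost container pool becomes cheaper per request.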

Cold Start and Mitigation Strategies

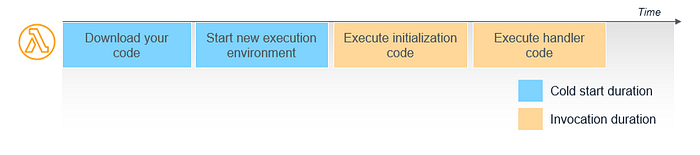

One caveat with Lambda is the "Cold Start" issue. If there is no available execution environment when a request arrives, AWS initiates a new one and loads your code. In languages like Node.js or Python, this latency is generally acceptable (a few hundred milliseconds).

However, with Java and Spring libraries, this can take seconds. AWS introduced 'SnapStart' for Java, significantly reducing this time (e.g., from 6 seconds to 200 milliseconds).

If you require a guaranteed response time, you can use the "Provisioned Concurrency" option, though this moves away from the pure pay-as-you-go model.
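Independently of these platform options, a common way to limit the impact of cold starts is to do expensive setup once per execution environment rather than once per request. The sketch below assumes the usual Lambda behavior that module-level code runs only when a new environment is created; the handler name and the `_EXPENSIVE_RESOURCE` placeholder are hypothetical.

```python
import time

# Module-level code runs once per execution environment, i.e. on a cold start.
_INIT_STARTED = time.monotonic()
_EXPENSIVE_RESOURCE = {"loaded_at": _INIT_STARTED}  # stand-in for SDK clients, config, models
_is_cold = True


def cold_aware_handler(event, context):
    """Report whether this invocation ran in a freshly created environment."""
    global _is_cold
    was_cold = _is_cold
    _is_cold = False  # later invocations in this environment are warm
    return {
        "cold_start": was_cold,
        # The same object is reused by every warm invocation.
        "resource_id": id(_EXPENSIVE_RESOURCE),
    }
```

Logging the `cold_start` flag gives a simple way to measure how often real traffic hits a cold environment before deciding whether Provisioned Concurrency is worth its fixed cost.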

Operational Constraints

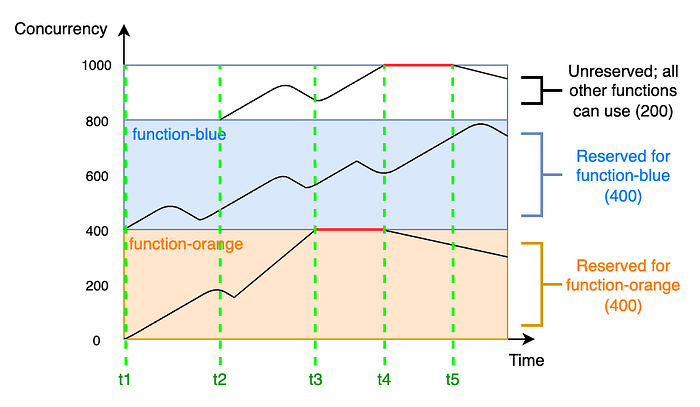

AWS manages the scalability of your Lambda, but it is not unlimited. Since you are working on shared infrastructure, AWS implements certain quotas to safeguard the region.

- Concurrent Execution Quota: The default is 1,000 per account per region (can be increased on request).

- Burst Rate: Each function can add up to 1,000 new execution environments every 10 seconds.

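If these limits are exceeded, Lambda throttles the excess requests: synchronous calls are rejected with an error, while asynchronous invocations are queued and retried.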
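To check whether a workload fits within these quotas, the needed concurrency can be estimated with Little's law (in-flight requests = arrival rate × average duration). The sketch below is a back-of-the-envelope model under simplifying assumptions: the function names are hypothetical, and the ramp-up estimate treats scaling as fixed steps of 1,000 environments per 10 seconds, which is coarser than the real behavior.

```python
import math


def required_concurrency(requests_per_second, avg_duration_s):
    """Little's law: in-flight requests = arrival rate x time per request."""
    # round() guards against float noise such as 30.000000000000004.
    return math.ceil(round(requests_per_second * avg_duration_s, 6))


def seconds_to_scale(target_concurrency, current_concurrency=0,
                     step=1000, step_interval_s=10):
    """Rough ramp time if capacity grows by `step` environments per interval."""
    missing = max(0, target_concurrency - current_concurrency)
    return math.ceil(missing / step) * step_interval_s


if __name__ == "__main__":
    needed = required_concurrency(requests_per_second=2000, avg_duration_s=0.25)
    print(needed, "concurrent executions needed")
    print(seconds_to_scale(needed), "seconds to ramp up from zero (rough estimate)")
```

If the estimate approaches the regional quota, request an increase before the traffic arrives rather than after throttling begins.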

Points of Attention

When migrating existing microservices to a Lambda model, consider the following:

- Package Size: Lambda limits the size of a deployment package (e.g., 250 MB unzipped for a zip package). Include only essential dependencies.

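- Database Connections: Since each execution environment handles one request at a time and holds its own connections, traditional connection pools are inefficient and a traffic spike can exhaust the database's connection limit. Using a database proxy (e.g., Amazon RDS Proxy) might be necessary.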

- Execution Time: The maximum execution time is 15 minutes. Lambda is therefore not suitable for long-running processes or HPC (High-Performance Computing); in such cases, EC2 should be preferred.
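For batch-style work that might approach the execution time limit, one option is to watch the remaining time budget and hand unfinished items to a later invocation. The sketch below relies on `get_remaining_time_in_millis()`, a method of the Python Lambda context object; the function name, the `handle_item` callback, and the 30-second safety margin are assumptions for illustration.

```python
SAFETY_MARGIN_MS = 30_000  # assumed buffer for saving progress before the timeout


def process_batch(items, context, handle_item):
    """Process items until the time budget runs low; return the unprocessed rest."""
    for index, item in enumerate(items):
        if context.get_remaining_time_in_millis() < SAFETY_MARGIN_MS:
            # Stop early so the caller can re-queue the remainder.
            return items[index:]
        handle_item(item)
    return []
```

The returned remainder could be pushed to a queue and picked up by a fresh invocation. If most batches hit the margin, that is a sign the workload belongs on longer-lived compute.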

Conclusion

AWS Lambda and the serverless model prove highly advantageous for greenfield development, offering operational simplicity and low initial costs. However, for applications that require fine-grained control or carry high and consistent loads, and for legacy systems, containers and Kubernetes may still be the better choice. Choosing the right tool for the job is key in modern cloud architecture.